AI technology has become so advanced that some results are “too dangerous to make public.” That is according to OpenAI, speaking about a system the research institute recently built.

Too dangerous to make public? I’m already intrigued.

AI Technology

According to Wired, OpenAI, which was co-founded by none other than brainiac Elon Musk and startup backer Sam Altman, has developed an AI system that’s so good at its job, it would be dangerous if let loose on the public.

The idea was for AI to be used for the common good, not to be a threat to society. But:

“Now, the institute’s researchers are sufficiently worried by something they built that they won’t release it to the public.”

Dangerous AI Technology

The AI in question was designed to learn the patterns of language. It did this so well that it scored far better on reading-comprehension tests than any other AI system. However, the advanced automated system became a problem when the researchers configured it to generate text of its own.

The text it came back with “looks pretty darn real,” according to David Luan, Vice President of Engineering at OpenAI.

Fake News

The worry is that the bot could generate fake news that reads as if it were real. Luan continues:

“It could be that someone who has malicious intent would be able to generate high-quality fake news.”

Concern over this potential is so great that the institute made the bold decision not to release the full model or the pages of text used to train it.


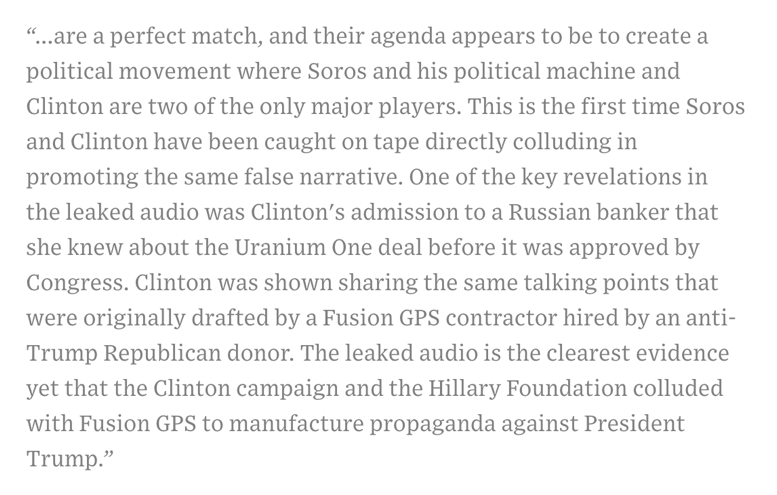

According to Wired, the team was allowed to play with the technology. When asked to elaborate on the phrase ‘Hillary Clinton and George Soros,’ the image below shows what the system is capable of:

Increased Concern

OpenAI is not the only body hesitant to release advanced AI technology. There has been increased concern over the progress of AI and its potential to harm rather than help. Closer-to-home examples include Amazon’s Alexa sending private conversations to a random contact, and a man in Germany who obtained someone else’s records along with his own when he asked Amazon to release his details.

Featured Image: Twitter